What is computational spectatorship?

At its simplest, computational spectatorship is a way to understand how machine seers mediate between moving images and their audiences.

OK, so what are machine seers, then?

Machine seers are techno-social systems that activate moving images as data artefacts to process them as if these data-images were being seen automatically on our behalf. The key to recognising these systems in the larger digital ecology is to ask whether they appear to see for us, before us, or instead of us.

On the technological side, these systems often include machine learning techniques, such as computer vision, as well as practices like automated video annotation, algorithmic browsing, and generative machine learning models, such as generative adversarial networks (GANs).

On the social side, these systems are enabled by the political economy of datafication and networked computing, including the emergence of and negotiated access to large collections of digital video as a form of cultural big data.

Why cultural big data?

Machine seers only became possible with the datafication of moving images at scale. There are several definitions of big data, but one significant aspect on which scholars agree is that data becomes “big” when its size requires non-standard systems to access it and process it in a meaningful way (see Ward & Barker, 2013). In other words, if it fits on, and can be processed with, an off-the-shelf laptop, it is probably not big data. Personal computing is constantly improving, of course: the guidance computer for the Apollo programme, introduced in 1966, was almost equivalent in processing power to a Nintendo Entertainment System, a gaming console which was mass-marketed less than twenty years later. Similarly, with cheaper access to high-performance cloud computing today, one does not necessarily need a GPU farm in one’s backyard to crunch very big numbers.

These changes are significant but not as groundbreaking as one may initially think, because with great processing power came even bigger data. According to a recent report by Cisco, by 2021 over 80% of all internet traffic will be video. If the report and the trends are to be believed, in a couple of years we will be collectively watching about one million video minutes every second (Cisco, 2018). So although somewhat elastic, this basic definition of big data still holds, and while we should be cautious about attaching too much meaning to data size alone, it can serve as an initial indicator of both the dominance of moving imagery with respect to other forms of cultural-cognitive production, and of its significant computational complexity as data.

Why spectatorship?

I use the notion of spectatorship in the vein of film theorists of the Screen tradition in Anglo-American film criticism, that is, as “ways of seeing” that compete with each other against an institutional background. It might initially seem like an old-fashioned choice, but in my view summoning spectatorship has at least one significant advantage: theories of spectatorship address inter-subjective systems of meaning, including technological development, without ascribing creative agency to machines, a stance about which I hold strong reservations on the grounds of its naive technological determinism. My hope is that talking about spectatorship of these machine-learned images recasts the debates about them in terms of aesthetics and not style, and in terms of political struggle instead of agency. In other words, I argue that spectatorship is a term that allows us to investigate the images produced through machine learning systems as a function of the societies that produce these systems in the first place. It is society and its economic and power relations that matter here, and machines are often used to displace or obfuscate these relations.

Can you give a concrete example?

Yes, I can. Last summer I was invited to collaborate on a project with BBC R&D to create a television programme out of archive footage using different machine learning techniques. Through computational methods, 10-12 minute sequences of the BBC television archive were automatically selected, annotated, browsed and re-edited; these sequences were then introduced by a presenter and displayed alongside a dashboard-like interface intended to help the viewer understand the machine processing.

The project was conceived, produced and delivered between May and August 2018, and it aired under the category of documentary on BBC Four in September as part of a two-night experimental programming block called “AITV on BBC 4.1”. Here is an overview note by the producer, a more detailed technological note by Tim Cowlishaw, with whom I worked on this project, and below a link to fragments of a talk I gave about the project at #LDNcreativeAI last year, where I go into more detail about the techniques and how they relate to the idea of computational spectatorship.

The recording of @dchavezheras talk about Computational Spectatorship at last night’s #LDNcreativeAI at @GI_London1 in three parts. This is the first https://t.co/X30pxTheCJ

— Luba Elliott (@elluba) November 16, 2018

Beyond the many constraints that come with making a TV programme, I argue the idea for this project is essentially one of computational spectatorship: machine seers watching television for us. More than creative machinery, this project binds the value of moving images to their computational potential as large datasets, and this signals a wider change in the categories we use to classify and study moving imagery.

Can you now give an example of how computational spectatorship helps us understand these practices and their resulting imagery?

People close to digital humanities, literary studies, or computational linguistics might recognise the BBC project as a form of “distant watching” in the Franco Moretti brand of computer-assisted literary criticism, only instead of processing thousands of books, the corpus is made out of moving imagery (see Moretti, 2013).

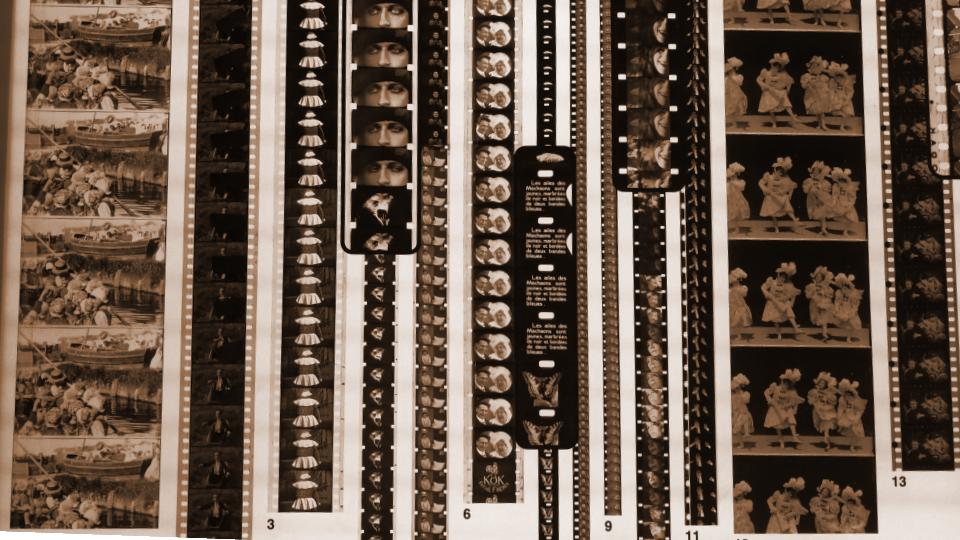

Distant watching might indeed be one of the consequences of computational spectatorship, but should not be seen as equivalent to it. In the case of film, for instance, computational spectatorship would imply the encoding of systems of spatio-temporal continuity, cinematography, and performance styles, all of which have been constructed as medium conventions over time, and have slowly sedimented into larger organising structures such as film genres, and institutionalised into, for example, film archives.

Similarly, in the case of television, modalities of watching are, at least initially, structured around channels and domestic viewing. Television spectatorship is not as reliant on continuity or sustained watching, and it is therefore already more fragmentary and “flatter” than cinematic viewing, which presupposes focused attention and a form of “deeper” silent communion in a darkened auditorium where viewers are in a “collective we” that has agreed not to change the channel (See Friedberg, 2009). From this point of view, cinema elicits an aesthetic response distinct from that of television: the cinematic vis-à-vis the televisual. These modes of watching are of course in flux, in constant negotiation with each other and with older visual traditions.

Following these notions, we can trace how machine seers are designed to encode watching practices and styles, thus making automated sequencing possible. In the case of the BBC project, this sequencing invites disjunctive watching and thematic construction instead of plot-following and continuity, but modalities of automated sequencing can either smooth out the gaps in our watching or intensify them. They can create either modular, self-contained units of meaning organised to optimise for maximal viewing (YouTube, Netflix, or object-based media, based on prediction and personalisation), or networked and porous units of meaning, organised from a specific seed point onwards (essayistic media based on generative models, where intentionality needs to be inferred as part of the watching, that is, an avant-garde; for more on essayistic thinking in moving imagery see Rascaroli, 2017).

In the case of Made by Machine, the automated browsing of the archive suggests plausible narration because the machine seers make mistakes: the system misgenders people, mislabels places and objects, constantly confuses the relevance of linguistic and visual units, and these mistakes shift the path through the archive in sometimes unexpected and even humorous ways, which can seem almost like a human gesture, almost intentional. In this project machine learning is not being used to assign values of necessity, but to approximate registers of contingency; the machine’s capacity for disambiguation is being used as a negative potentiality that is consistent with narrative intentionality.
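To make the point about mistakes shifting the path a little more tangible, here is a toy sketch in Python. It is not the system built for Made by Machine: the clip names, the tags, the Jaccard similarity measure and the greedy "jump to the most similar unvisited clip" rule are all assumptions chosen for illustration. It only shows how a single mislabelled seed clip can send an automated browse from the same starting point down a different route through an archive.

```python
def jaccard(a, b):
    """Overlap between two tag sets: |intersection| / |union|."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def browse(archive, seed, steps):
    """Greedy path: from the seed, repeatedly jump to the most similar unvisited clip."""
    path = [seed]
    current = seed
    for _ in range(steps):
        candidates = [clip for clip in archive if clip not in path]
        if not candidates:
            break
        current = max(candidates, key=lambda clip: jaccard(archive[current], archive[clip]))
        path.append(current)
    return path


# Hypothetical archive: clip name -> tags a machine seer might assign.
clean_archive = {
    "harbour": {"boat", "sea", "man"},
    "regatta": {"boat", "sea", "crowd"},
    "promenade": {"crowd", "sea", "street"},
    "bathtime": {"boat", "water", "child"},
}

# Same archive, but the seed clip is mislabelled:
# the sea is read as "water" and the man as a "child".
noisy_archive = dict(clean_archive)
noisy_archive["harbour"] = {"boat", "water", "child"}

print(browse(clean_archive, "harbour", 2))  # ['harbour', 'regatta', 'promenade']
print(browse(noisy_archive, "harbour", 2))  # ['harbour', 'bathtime', 'regatta']
```

With correct labels the path stays by the seaside; with two wrong labels on the seed it detours through a child's bath before returning to the boats, the kind of swerve that a viewer may read as a gesture.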

So what is the ultimate goal of computational spectatorship? What is your endgame with this?

In a very classical humanities gesture, I am tempted to say that computational spectatorship is not the answer to anything, but a way of stating the questions about machine-learned moving images. There is some truth in this way of thinking, but it is not the approach I take for my research. Computational spectatorship, the way I see it, has a much more precise intellectual agenda: its goal is to define the aesthetic conditions of machine-seeing and to explain how these conditions relate to power relations in society.

In a nod to Ryoji Ikeda, we can then say that computational spectatorship allows us to inaugurate the age of the datamatic moving image which is, unlike the cinematic or the televisual before it, the first aesthetic modality that is fundamentally computational: more than novel ways of creating or analysing imagery, machine seers afford us novel ways of enjoying imagery; they fetishise calculation and turn the datafication of society into its own form of spectacle.

There might indeed be styles of image-making that come to be associated with machine learning, and it can be argued that there are novel redistributions of creative agencies through technological human-machine assemblages, but it is this spectaculum per computatio which allows us to think through these practices aesthetically: datamatic watching allows us, potentially, to enjoy sequencing without continuity, narrative without authorship and, ultimately, presence without subject. These three aspects comprise the three layers of a conjectural space: style is encoded at the lowermost layer, agency is simulated at the middle layer, and aesthetics is fully realised at the uppermost layer.

References

Alton, C. (2018) AI and the Archive – the making of Made by Machine. BBC R&D Blog [online]. Available from: https://www.bbc.co.uk/rd/blog/2018-09-artificial-intelligence-archive-made-machine (Accessed 27 September 2018).

Cisco (2018) Cisco Visual Networking Index: Global Mobile Data Traffic Forecast Update, 2016–2021. White paper.

Cowlishaw, T. (2018) Using Artificial Intelligence to Search the Archive. BBC R&D Blog [online]. Available from: https://www.bbc.co.uk/rd/blog/2018-10-artificial-intelligence-archive-television-bbc4 (Accessed 27 September 2018).

Friedberg, A. (2009) The Virtual Window: From Alberti to Microsoft. Cambridge, Mass.: The MIT Press.

Ikeda, R. et al. (2012) Ryoji Ikeda: Datamatics. Charta.

Moretti, F. (2013) Distant Reading. 1st edition. London; New York: Verso.

Rascaroli, L. (2017) How the Essay Film Thinks. 1st edition. New York: Oxford University Press.

Ward, J. S. & Barker, A. (2013) Undefined By Data: A Survey of Big Data Definitions. arXiv:1309.5821 [cs]. [online]. Available from: http://arxiv.org/abs/1309.5821 (Accessed 17 October 2018).

dear daniel, thx for sharing!

here are a few thoughts:

– I’m especially interested in how you would frame GANs in relation to computational spectatorship and GAN intersubjectivity – in my paper I’m trying to build a (post)phenomenological framework to make sense of the relation between generator, discriminator and latent space as a sort of virtual screen shared between the two.

– i think there’s a great intuition in proposing to understand computational spectatorship as the transformation of a society based on datafication into (or back into) a society of spectacle. i think this relationship could be dug into much further.

– your last remark about datamatic watching allowing us to enjoy sequencing without continuity (intended as style) / narrative without authorship (intended as agency) and ultimately presence without subject (intended as aesthetic) seems very interesting, but the wording and the different layers (lower, middle, high) you are referring to are not really clear to me.

– i’d also be very interested in understanding more about the distinction between agency and politics you make at the beginning, and your interest in dealing with politics (or political struggle) rather than agency (even though agency comes back at the end, as mentioned before), because i tend to think of political struggle and agency together.

looking forward to talking more!
